If You Can Describe It, You Shouldn’t Be Building It

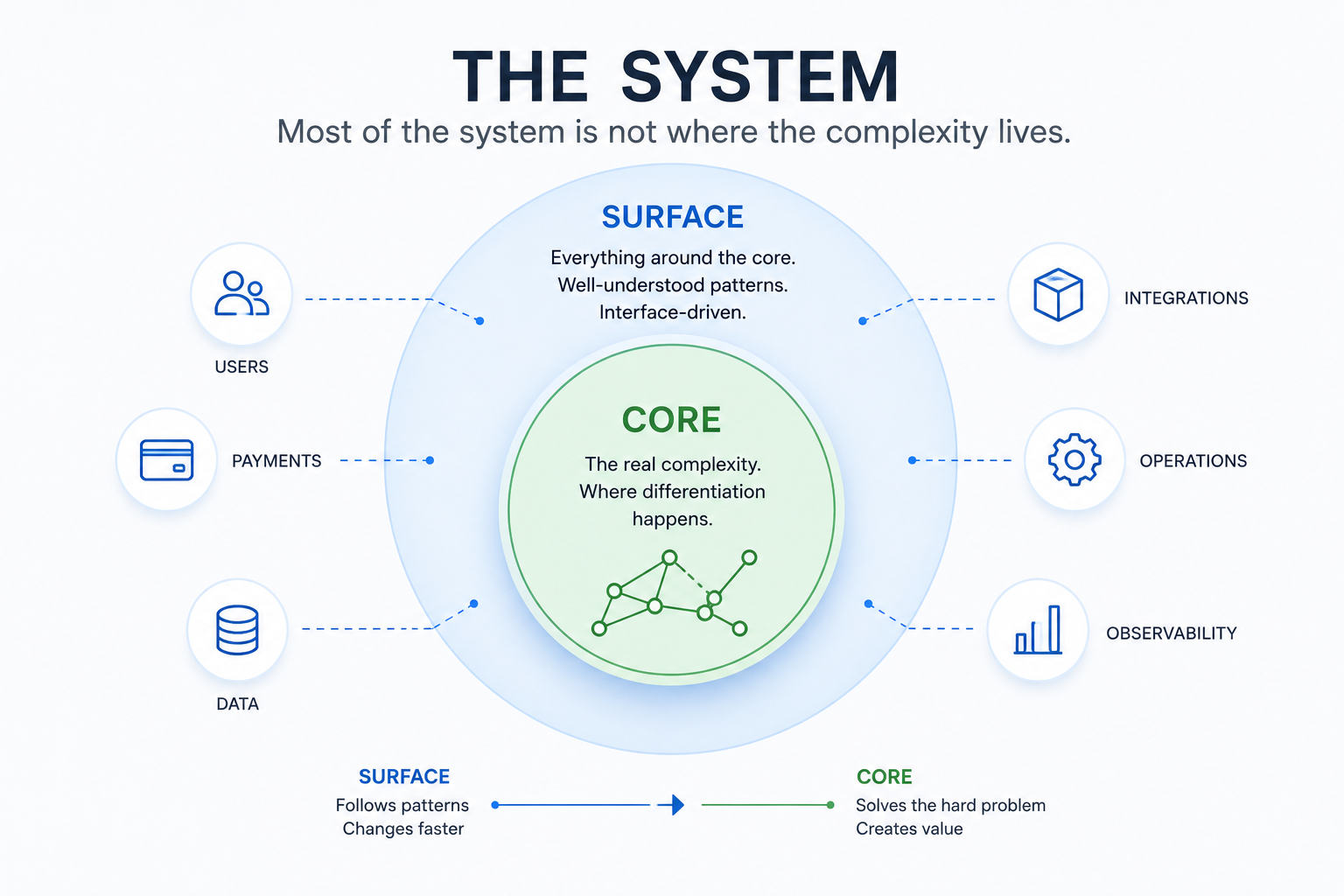

May 12, 2026

Most complex systems are built around a hard, algorithmic core, but that’s not where most engineering time goes. Instead, teams keep re-implementing well-understood patterns like booking, payments, and system integration. With agentic AI, this balance shifts and the real challenge becomes understanding the system, not building it.

How agentic AI shifts engineering from implementation to understanding

If I had to build a large-scale system today — one that continuously assigns incoming requests to a set of limited resources — I wouldn’t start with microservices, dashboards, or even infrastructure.

I would start with a different question:

What is actually hard about this system — and what is not?

Because when working on systems like this, one thing becomes clear very quickly:

Most of the system is not where the complexity lives.

The Core Problem

At its heart, the problem can be described simply:

Assign incoming requests to a set of resources while minimizing a global objective.

That could mean coordinating vehicles, scheduling jobs, or allocating compute workloads. The abstraction doesn’t really matter.
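To make the whiteboard version concrete, here is a minimal sketch in Python. The cost matrix and the function names (`total_cost`, `exact_assignment`, `greedy_assignment`) are assumptions made up for illustration; the global objective is taken to be the plain sum of per-assignment costs. The exact search enumerates all n! one-to-one assignments, so it only works for tiny inputs, while the greedy heuristic decides immediately per request and can end up with a worse global result.

```python
from itertools import permutations

# Hypothetical cost matrix: cost[r][k] is the cost of serving request r with resource k.
cost = [
    [1, 2, 9],
    [1, 8, 9],
    [9, 9, 3],
]

def total_cost(assignment, cost):
    """Global objective: sum of costs over all (request, resource) pairs."""
    return sum(cost[r][k] for r, k in enumerate(assignment))

def exact_assignment(cost):
    """Try every one-to-one assignment; n! candidates, so only viable for tiny n."""
    n = len(cost)
    return min(permutations(range(n)), key=lambda a: total_cost(a, cost))

def greedy_assignment(cost):
    """Serve requests in arrival order, each taking its cheapest free resource."""
    free = set(range(len(cost[0])))
    assignment = []
    for row in cost:
        k = min(free, key=lambda j: row[j])
        free.remove(k)
        assignment.append(k)
    return tuple(assignment)

best = exact_assignment(cost)
fast = greedy_assignment(cost)
print("exact: ", best, total_cost(best, cost))
print("greedy:", fast, total_cost(fast, cost))
```

On this toy input the greedy result costs twice as much as the exact one, because the first request takes the resource that the second request needed far more. That gap between a fast local decision and the global objective is the part that gets harder once the problem runs in real time.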

What matters is what happens when this problem leaves the whiteboard.

In reality, the system operates under constraints that fundamentally change its nature:

- decisions must be made in real time

- the solution space is too large for exact optimization

- commitments are made externally and must be honored

- demand fluctuates unpredictably

- the environment changes continuously

- system state is never static

This is not a typical backend problem.

It is algorithm engineering under real-world constraints.

And that is where the actual differentiation happens.

Everything Around the Core

And once you understand where the real complexity lies, something else becomes obvious:

most of the system is not part of that complexity at all.

Surrounding the core is a large system of supporting capabilities:

- booking and request handling

- payment processing

- identity and access handling

- resource tracking

- operational tooling

- monitoring and observability

These parts are essential — but they are not where your system differentiates.

They follow established patterns, are largely interface-driven, and have been implemented countless times across industries.

Where Agentic AI Changes the Game

And this is exactly the kind of structure modern AI systems are exceptionally good at working with.

Not because these parts are trivial — but because they are well-structured.

Modern AI systems are very effective at:

- generating API layers from interface definitions

- building internal tools and dashboards

- wiring services together

- implementing standard error handling and edge cases

In other words:

AI is very effective at building the surface area of the system.

That changes the economics.

At that point, continuing to build these systems manually becomes increasingly hard to justify.

But AI struggles with the core:

- designing optimization algorithms under constraints

- balancing heuristics against latency requirements

- reasoning about non-linear system behavior

- translating real-world trade-offs into formal objectives

That boundary matters.

Why the Core Resists Automation

The difficulty is not just that the core problem is complex.

It’s that it behaves fundamentally differently from the rest of the system.

First, the solution space is not well represented in training data. While there are research papers, there is comparatively little real-world, production-grade code — and even less capturing why certain decisions were made.

Second, the system is highly non-linear. Small parameter changes can have large, unpredictable effects. Local improvements can degrade global behavior. Outcomes only emerge at scale.

But most importantly:

the system is defined by trade-offs, not by a single objective.

For example:

- tighter constraints improve the experience for successful outcomes but reduce the number of successful outcomes overall

- increasing efficiency improves system utilization but can reduce reliability or flexibility

There is no single “correct” solution.

The real challenge is deciding what “optimal” even means.

And that decision is not purely technical — it is shaped by product, business, and operational considerations.
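A small sketch can show how the choice of objective, rather than the algorithm, decides the outcome. Everything here is hypothetical: the two policies, their numbers, and the single weight `w_quality` are invented for illustration, and a real objective would have many more terms.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Policy:
    name: str
    success_rate: float  # share of requests that end in a successful outcome
    quality: float       # experience score for successful outcomes, 0..1

# Hypothetical numbers: tighter constraints raise quality but lower success rate.
STRICT = Policy("strict", success_rate=0.70, quality=0.95)
RELAXED = Policy("relaxed", success_rate=0.90, quality=0.75)

def score(policy, w_quality):
    """Weighted objective; w_quality encodes a product decision, not a technical one."""
    return w_quality * policy.quality + (1 - w_quality) * policy.success_rate

def preferred(w_quality):
    """Return the name of the policy that scores highest under the given weight."""
    return max((STRICT, RELAXED), key=lambda p: score(p, w_quality)).name

print(preferred(0.8))  # weight favors experience
print(preferred(0.2))  # weight favors coverage
```

The same two candidate policies produce opposite answers depending on the weight. No amount of optimization effort resolves that; someone has to decide what the weight should be.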

We Are Still Handwriting the Wrong Software

If the core is defined by complex trade-offs, the surrounding system is not.

And yet, a large portion of engineering effort is still spent there.

Looking back, a significant amount of time goes into areas like:

- payment integration

- booking flows

- API layers derived from interface definitions

- error handling and edge case management

These parts are essential — but they follow well-understood patterns.

Take payments as an example.

There is very little differentiation in implementing payment systems today. The challenges are mostly around integration, compliance, and reliability — all of which follow established approaches and are often supported by external providers.

And yet, teams still spend significant effort wiring these systems together manually.

The same is true, to a slightly lesser extent, for booking.

While the exact flow may differ depending on the domain, it can still be described precisely:

- what input state is required

- what transitions are allowed

- what constitutes success

- what defines failure

For example:

- a booking is only valid if capacity is reserved and payment is authorized

- a booking fails if no capacity is available

- a booking is invalid if payment authorization is declined

These are not vague requirements.

They are explicit system specifications.
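As an illustration of how directly such rules translate into something executable, the three rules above can be sketched as a transition table. The state and event names are assumptions, as is the ordering (capacity is reserved before payment is attempted); the original rules do not prescribe either.

```python
from enum import Enum

class State(Enum):
    REQUESTED = "requested"
    CAPACITY_RESERVED = "capacity_reserved"
    CONFIRMED = "confirmed"  # valid: capacity reserved and payment authorized
    FAILED = "failed"        # no capacity available
    INVALID = "invalid"      # payment authorization declined

# Allowed transitions; anything not listed here is rejected.
TRANSITIONS = {
    (State.REQUESTED, "capacity_reserved"): State.CAPACITY_RESERVED,
    (State.REQUESTED, "no_capacity"): State.FAILED,
    (State.CAPACITY_RESERVED, "payment_authorized"): State.CONFIRMED,
    (State.CAPACITY_RESERVED, "payment_declined"): State.INVALID,
}

def step(state, event):
    """Apply one event, raising ValueError if the transition is not allowed."""
    try:
        return TRANSITIONS[(state, event)]
    except KeyError:
        raise ValueError(f"transition not allowed: {state.name} on {event!r}") from None

def run(events, state=State.REQUESTED):
    """Fold a sequence of events over the state machine and return the final state."""
    for event in events:
        state = step(state, event)
    return state

print(run(["capacity_reserved", "payment_authorized"]))
print(run(["no_capacity"]))
print(run(["capacity_reserved", "payment_declined"]))
```

Because `CONFIRMED` is only reachable through `CAPACITY_RESERVED` followed by `payment_authorized`, the invariant from the first rule holds by construction rather than by convention.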

And that raises an uncomfortable question:

If a system can be fully described in terms of states, transitions, invariants, and error conditions — why are we still implementing it manually?

The Real Shift

If the hard part of the system is defining constraints and trade-offs — and the rest can increasingly be generated — then the nature of engineering work changes.

The shift is not that AI writes code faster.

It’s this:

The bottleneck moves from implementing systems to defining them correctly.

Instead of focusing on:

- writing services

- wiring systems together

the focus shifts toward:

- defining constraints

- specifying behavior

- shaping the objective function

Teams that internalize this shift will build faster and with smaller teams. Teams that don’t will find themselves optimizing the least important parts of their systems.

The Real Risk Isn’t AI — It’s Misunderstanding the System

And this leads to a more subtle risk.

It’s easy to focus on the visible parts of building software:

- clean architectures

- well-structured APIs

- service boundaries

- implementation details

These things matter.

But in systems with an algorithmic core, they are not the hard part.

The hard part is understanding:

- what the system is actually optimizing

- what trade-offs are being made

- what constraints are non-negotiable

- what invariants must never be violated

Without that understanding, everything else becomes guesswork.

AI Doesn’t Remove This Responsibility

If anything, modern AI makes this more critical.

Because once implementation becomes cheap, the limiting factor is no longer writing code.

It is:

defining the system correctly in the first place.

That’s the real shift.

You can have the best tools, the most advanced models, or an entire system of agents generating code for you.

But if you don’t understand:

- what your system should do

- why it should behave that way

- and what trade-offs you are making

then you’re not building a system.

You’re just producing software.